Visual Texture

Identification of Different Car Seat Headrests with CNNs

To identify headrests in automotive seats for a leading European tier-1 supplier, Neadvance developed the NEA-ROB for Headrest Inspection vision system. The solution is based on computer vision and AI, using deep neural networks, and achieves an accuracy rate of 99.95%.

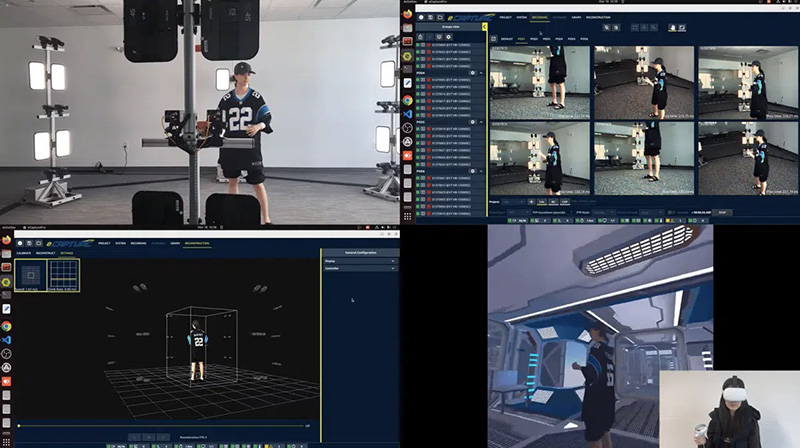

Beyond the analysis of complex objects (textured, with complex 3D geometry), this application required more restrictive analysis thresholds. Neadvance applied this type of approach to develop an automatic solution that identifies headrests by generating a unique code, meeting the production traceability requirements imposed by the OEM on the tier-1 supplier. The solution consists of a robotised system that identifies the different backrest models through visual characteristics extracted from images of these models. The 101 distinct backrest models are distinguished by morphology (eleven distinct volumes), material (type, texture, markings) and seat stitch (type, colour).
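Since each of the 101 models is distinguished by its combination of volume, material and seat stitch, one way to turn the separate visual classifications into a single model code is a catalogue lookup. The Python sketch below assumes each attribute has already been classified; the `Attributes` type, the `identify_model` function and all catalogue entries and codes are hypothetical, as the article does not describe how the code is generated.

```python
from typing import Dict, NamedTuple


class Attributes(NamedTuple):
    volume: str    # one of the eleven morphology (volume) classes
    material: str  # material class: a fabric, synthetic leather or leather variant
    stitch: str    # seat stitch type and colour


# Hypothetical catalogue: the real table of 101 models is not published.
CATALOGUE: Dict[Attributes, str] = {
    Attributes("volume_01", "fabric_black", "single_grey"): "MODEL-001",
    Attributes("volume_01", "leather_black", "single_grey"): "MODEL-002",
    Attributes("volume_02", "fabric_black", "double_red"): "MODEL-003",
}


def identify_model(attrs: Attributes, catalogue: Dict[Attributes, str] = CATALOGUE) -> str:
    """Return the model code for a classified combination of attributes.

    An unknown combination raises an error instead of being mapped to the
    closest model, so an impossible combination is flagged for review
    rather than given a code.
    """
    try:
        return catalogue[attrs]
    except KeyError:
        raise LookupError(f"no model matches {attrs}") from None


print(identify_model(Attributes("volume_01", "leather_black", "single_grey")))  # MODEL-002
```

Keeping the table explicit means a new model only needs a new catalogue entry, provided its attribute combination is not already taken by another model.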

Shape and Texture Analysis

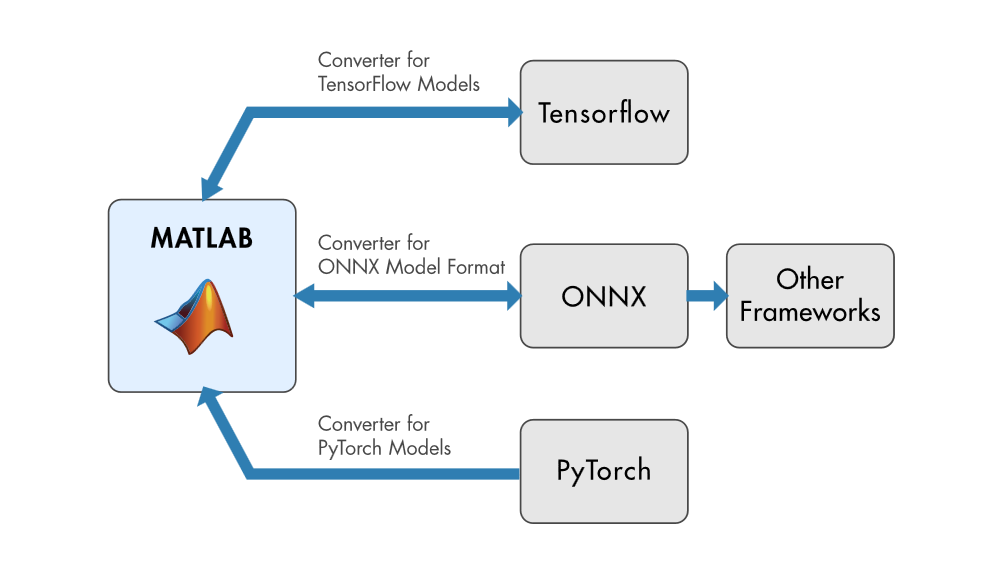

Research in shape and texture analysis has produced a diverse range of techniques for extracting image characteristics in both the spatial and the spectral domain; combined with purpose-designed classifiers, these techniques make it possible to identify and classify such objects. The classifiers applied are diverse, most notably those based on methods from statistics and AI. With the recent maturation of deep neural networks, in particular convolutional neural networks (CNNs), and improvements in GPU technology, these methods have become computationally manageable. CNN architectures are the state of the art in texture analysis and in pattern and object recognition. There are several network topologies, e.g. ResNet, DenseNet and Inception. The application described here implements an Inception V3 ConvNet.

Accuracy Rate of 99.95%

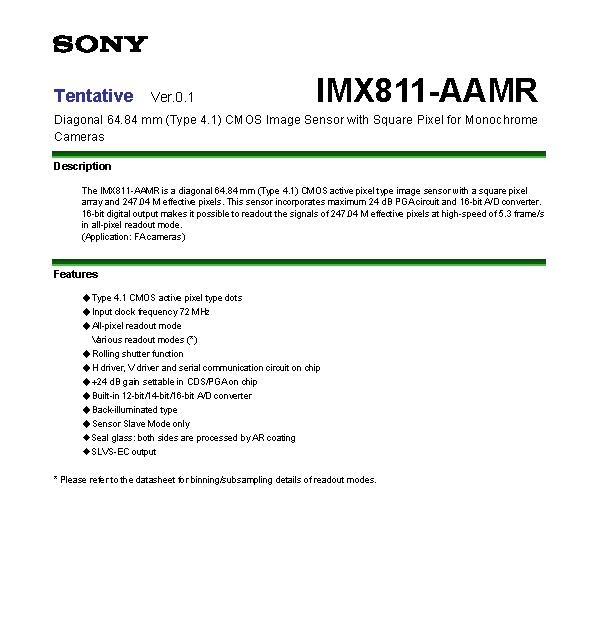

The identification system is based on characteristics extracted from the volume, material and seat stitch. Two acquisition zones were defined for the robotised vision system: (a) acquisition with cameras and fixed illumination; (b) acquisition with cameras and lighting mounted on the robot gripper. Images from the first zone are used to identify the volume type, while images from the second zone are used to identify the material and seat stitch types.

The materials fall into three groups: fabric, synthetic leather and leather. Within these groups, subgroups relate to the colour and the type of texture pattern. The image database of the front top is composed of 24 classes (distinct fabrics, synthetic leathers and leathers in various colours). The texture analysis is based on deep CNNs. In the training phase, ten high-resolution images were acquired per class, 240 images in total. A standard image section, used as the texel, is defined for all material types. To generate the network's input images, a sliding window was applied to the images acquired from all models, yielding 55,000 image sections per class (1,320,000 in total). The architecture used for identification/classification was Inception V3. The hyperparameters and the base model of the CNN were tuned during training on this database. At the end of training, the Inception V3 network achieved 100% classification accuracy on the validation set.

The CNN model was integrated into the NEA-ROB for Headrest Inspection, which includes an IPC (industrial PC), two high-resolution cameras (one of them 16-bit), high-power LED illumination and a robotic structure. The first test phase was carried out with images acquired with the machine outside the production line. The 24 material classes were submitted to the vision system, generating 5,000 test texels per class (120,000 in total). The accuracy rate on this test data was 99.95%. The system is already fully integrated into the production line.
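The texel extraction step can be sketched as a sliding window over each acquired image. The Python/NumPy sketch below is a minimal illustration assuming square texels and a fixed stride; the function names `extract_texels` and `texel_count`, and the window and stride values, are hypothetical, since the article does not state the texel size or the step used.

```python
import numpy as np


def texel_count(height: int, width: int, window: int, stride: int) -> int:
    """Number of full window x window sections that fit in an image at the given stride."""
    if window > height or window > width:
        return 0
    rows = (height - window) // stride + 1
    cols = (width - window) // stride + 1
    return rows * cols


def extract_texels(image: np.ndarray, window: int, stride: int) -> np.ndarray:
    """Cut an image (H x W or H x W x C) into square sections with a sliding window.

    Sections are returned in row-major order (left to right, then top to bottom).
    Partial windows at the right and bottom borders are discarded.
    """
    height, width = image.shape[:2]
    patches = [
        image[top:top + window, left:left + window]
        for top in range(0, height - window + 1, stride)
        for left in range(0, width - window + 1, stride)
    ]
    if not patches:
        # Image smaller than one window: return an empty stack with the right shape.
        return np.empty((0, window, window) + image.shape[2:], dtype=image.dtype)
    return np.stack(patches)


# Hypothetical values for illustration only: a random stand-in for a camera image.
image = np.random.randint(0, 256, size=(100, 120, 3), dtype=np.uint8)
texels = extract_texels(image, window=32, stride=16)
print(texels.shape)  # (30, 32, 32, 3)
```

With a stride smaller than the window, neighbouring texels overlap, which is one way a handful of high-resolution images per class can yield tens of thousands of sections; the article does not say whether NEA-ROB uses overlapping windows.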

Summary

The Castinspector allows the identification of model variants of car seat backrests, specifically the identification of the type of material based on its visual texture. The system is based on deep CNNs whose input consists of texels (optimised image sections). Currently, the system is undergoing online validation in the manufacturing unit of a tier-1 supplier, with an accuracy rate of 99.95%. Neadvance already plans to roll this technology out to other customers (tier-1 and tier-2 suppliers) and to a wide range of products and industries.